If you've sat through an access control audit recently, you already know the uncomfortable truth: the findings rarely land in the areas you've invested the most. Your identity provider is configured correctly. MFA is deployed. Your IGA tool generates the reports it's supposed to generate. And yet there's one question you dread hearing from an auditor - because you're not sure you can answer it cleanly: "Can you prove who had access to what, when, and why?"

That question isn't academic. For CISOs and security leaders in regulated industries, the inability to answer it has real consequences - not just for the organization, but for you personally. When a breach occurs and the board wants to understand what went wrong, "we had roles configured" isn't a defense. The question is whether you can produce evidence that those controls were actually enforced at the moment it mattered.

The problem, more often than not, is authorization. Not authentication (who you are), but authorization (what you're allowed to do, in what context, right now). It's the layer most enterprises have underinvested in, and it's where auditors consistently find the gaps that turn compliance reviews into career-defining moments.

Broken access control has held the number-one spot on the OWASP Top 10 for two consecutive releases. In the 2025 edition, every single application tested showed some form of broken access control. Meanwhile, the Verizon 2025 DBIR found that 22% of breaches began with stolen or compromised credentials - and 88% of basic web application attacks involved their use. These aren't exotic attack vectors. They're failures of the authorization layer that sits between identity and access.

Here are the five blind spots auditors find most often - and what you can actually do about them.

1. Authorization logic is scattered across your codebase

This is the blind spot that creates all the others.

In most enterprise environments, authorization decisions aren't made by a single system. They're made by dozens - or hundreds - of individual applications, each with their own access control logic baked into the code. One service checks roles in a middleware layer. Another has if/else statements buried in a controller. A third relies on a configuration file that was last updated eighteen months ago. Nobody has a complete picture of where access decisions are actually being made.

We've seen this pattern across hundreds of security teams: custom, homegrown authorization logic is consistently the single biggest source of IAM technical debt. And the consequences go beyond technical debt. When authorization logic is scattered, you can't audit it centrally. You can't test it in isolation. And when an auditor asks "show me the policy that governs access to this resource," the answer often involves pulling in a developer to walk through application code - which is neither scalable nor confidence-inspiring.

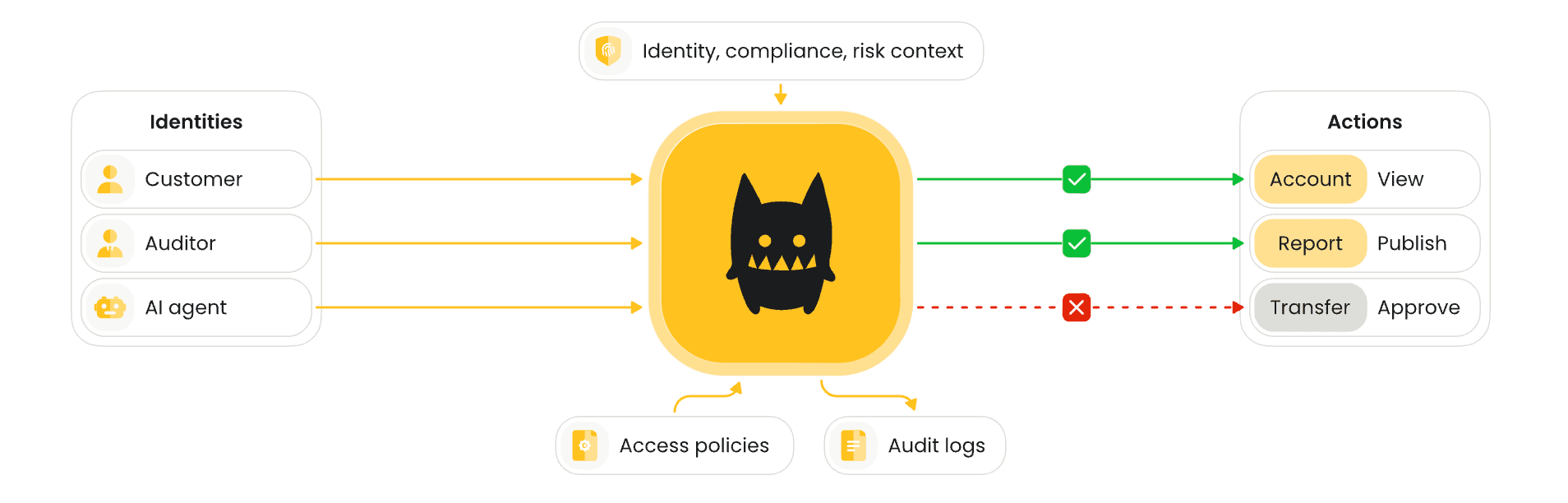

This is the fundamental problem that externalized authorization solves. Instead of embedding access control decisions inside every application, you define policies centrally and enforce them wherever the decision needs to be made - at the API gateway, within application code, at the data layer. The policy decision point (PDP) doesn't care where the enforcement point lives. What matters is that every decision is made against the same policy, and every decision is logged. Not every application lends itself to this: legacy systems and commercial off-the-shelf software may not support externalized authorization directly. But even then, you can enforce policies at the API or proxy layer in front of those systems, bringing them into the same governance model without rewriting them.
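To make the pattern concrete, here is a minimal Python sketch. It is not Cerbos's API. A single `decide()` function stands in for the PDP, and two enforcement points (an API handler and a data-layer function) both call it. The policy table, role names, and function names are hypothetical. A real PDP would run as a separate service and load its policies from a central store.

```python
from datetime import datetime, timezone

# Hypothetical central policy: (resource kind, action) -> roles allowed.
# In a real deployment this is loaded from a policy store, not hard-coded.
POLICY_VERSION = "v1"
POLICY = {
    ("invoice", "read"): {"finance", "auditor"},
    ("invoice", "approve"): {"finance_manager"},
}

# Every decision lands here, whichever enforcement point asked.
DECISION_LOG: list[dict] = []


def decide(principal_id: str, roles: set[str], kind: str,
           resource_id: str, action: str) -> bool:
    """Stand-in PDP: one policy, one function, every decision logged."""
    allowed = bool(roles & POLICY.get((kind, action), set()))
    DECISION_LOG.append({
        "principal": principal_id,
        "resource": f"{kind}/{resource_id}",
        "action": action,
        "allowed": allowed,
        "policy_version": POLICY_VERSION,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return allowed


def get_invoice_handler(user_id: str, roles: set[str], invoice_id: str) -> int:
    """Enforcement point 1: an API handler returning an HTTP status code."""
    if not decide(user_id, roles, "invoice", invoice_id, "read"):
        return 403
    return 200


def approve_invoice(user_id: str, roles: set[str], invoice_id: str) -> str:
    """Enforcement point 2: a data-layer function using the same PDP."""
    if not decide(user_id, roles, "invoice", invoice_id, "approve"):
        raise PermissionError(f"{user_id} may not approve {invoice_id}")
    return f"{invoice_id} approved"
```

Both enforcement points share one decision function, so a policy change or a change to the log format happens in one place. That single point is what makes central auditing possible.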

The industry is converging on what's known as the Authorization Management Platform (AMP) architecture, which follows this exact pattern: centralized policy administration with decentralized enforcement. If your authorization logic still lives inside your applications, that's the gap your auditor is going to find.

What to do now: Inventory where authorization decisions are made across your application portfolio. Identify which decisions are auditable and which aren't. That inventory alone will reveal the scope of the problem - and give you the business case to address it.
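One rough way to start that inventory is a heuristic scan of source code for inline authorization checks. The Python sketch below assumes those checks look like `has_role(...)`, `role == "..."`, or decorators such as `@requires_role`. These patterns are illustrative assumptions. A scan like this will miss some checks and flag some false positives, so treat its output as a starting list for manual review, not as a complete inventory.

```python
import re
from collections import Counter
from pathlib import Path

# Heuristic, hypothetical signatures of inline authorization logic.
# Tune them to the idioms your codebases actually use.
PATTERNS = {
    "role_function": re.compile(r"\b(has_role|hasRole|is_admin|isAdmin)\s*\("),
    "role_comparison": re.compile(r"\brole\s*(==|!=)\s*['\"]"),
    "permission_decorator": re.compile(r"@(requires_role|permission_required)\b"),
}


def scan_text(path: str, text: str) -> list[tuple[str, int, str]]:
    """Return (path, line number, pattern name) for each suspected inline check."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((path, lineno, name))
    return hits


def scan_repo(root: str, suffixes=(".py", ".java", ".ts", ".go")) -> Counter:
    """Count suspected inline authorization checks per file under root."""
    counts: Counter = Counter()
    for file in Path(root).rglob("*"):
        if file.is_file() and file.suffix in suffixes:
            text = file.read_text(errors="ignore")
            for path, _, _ in scan_text(str(file), text):
                counts[path] += 1
    return counts
```

Files with the highest counts are good places to ask the owning team how their decisions are logged.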

2. You can show policy, but not proof of enforcement

This one catches experienced security leaders off guard.

Most organizations can produce documentation of their access control policies. The IGA tool shows what roles exist. The access management platform shows what groups are assigned. The policy documents describe who should have access to what. On paper, it looks solid.

But auditors aren't asking what should have happened. They're asking what did happen. And the gap between documented intent and provable enforcement is where most access control audits go sideways.

IAM and governance tools are good at documenting intent. They can tell you what roles exist, what policies were defined, and who should have access according to those rules. What they often can't tell you is what was actually enforced when a real request hit a real system. When something goes wrong, teams end up reconstructing what should have happened instead of inspecting evidence of what did happen.

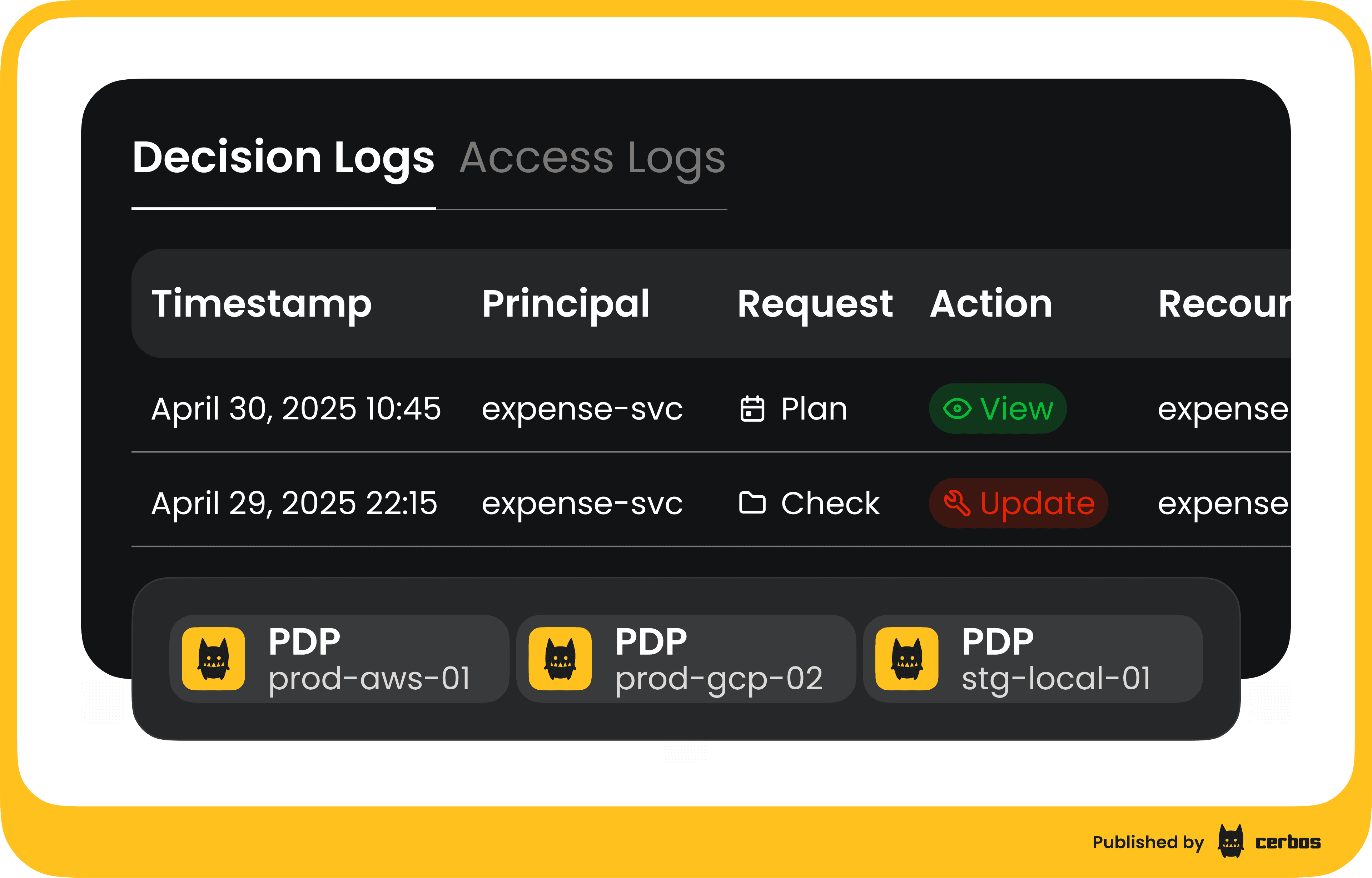

This is particularly acute during incident response. When a breach occurs, you need to answer "what could this compromised account access?" - and the answer most teams can produce is a list of systems, not the granular permissions within them. Which endpoints could they hit? Which records could they read or modify? Under what conditions? If your authorization decisions aren't logged with sufficient detail - who made the request, what resource they accessed, what action they took, the decision that was rendered, which policy version was evaluated, and a timestamp - you're working from inference rather than evidence. You can show policy, but not proof.

The fix is straightforward in concept, though it requires architectural commitment: every authorization decision should produce an auditable record. That means logging not just the outcome (allow or deny) but the full context of the decision. When authorization is externalized through a PDP, this logging comes as a structural byproduct - every request flows through a single decision point that can capture the complete picture.
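As an illustration of what "full context" can mean, the record below captures the six fields listed earlier in this section: principal, resource, action, decision, policy version, and timestamp. The field names and the JSON-lines format are assumptions for illustration, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One authorization decision. Field names are illustrative, not a standard."""
    principal: str       # who made the request
    resource: str        # what resource was requested
    action: str          # what action was attempted
    decision: str        # "ALLOW" or "DENY"
    policy_version: str  # which policy version was evaluated
    timestamp: str       # when, as an ISO 8601 UTC string

    def to_json(self) -> str:
        """Serialize as one JSON line for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


def record_decision(principal: str, resource: str, action: str,
                    allowed: bool, policy_version: str) -> DecisionRecord:
    """Build the record at the moment the PDP renders its decision."""
    return DecisionRecord(
        principal=principal,
        resource=resource,
        action=action,
        decision="ALLOW" if allowed else "DENY",
        policy_version=policy_version,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
```

Denials are logged as carefully as allows. During an investigation, a pattern of denied attempts is often as informative as the allowed requests.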

What to do now: Pick your three most sensitive systems and ask: "Can I produce a complete audit trail of every access decision made against this system in the last 30 days?" If the answer involves stitching together logs from multiple sources or relying on application-level logging that may not be comprehensive, you've found the gap.
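If decisions are already logged as records with the six fields described in this section, the 30-day question can be checked mechanically. This Python sketch makes three assumptions about your log format. Each record is a dict. Timestamps are ISO 8601 strings with a UTC offset. A system's resources share an identifier prefix such as `payments/`.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

REQUIRED_FIELDS = ("principal", "resource", "action", "decision",
                   "policy_version", "timestamp")


def audit_trail(records: list[dict], system_prefix: str, days: int = 30,
                now: Optional[datetime] = None) -> tuple[list[dict], list[dict]]:
    """Split one system's recent decision records into complete and incomplete.

    Assumes timestamps are ISO 8601 strings with a UTC offset and that the
    system's resource identifiers share `system_prefix`.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    complete, incomplete = [], []
    for rec in records:
        if not str(rec.get("resource", "")).startswith(system_prefix):
            continue  # belongs to another system
        ts = rec.get("timestamp")
        if ts and datetime.fromisoformat(ts) < cutoff:
            continue  # outside the review window
        # A record missing any field (including its timestamp) is a gap.
        if all(rec.get(f) for f in REQUIRED_FIELDS):
            complete.append(rec)
        else:
            incomplete.append(rec)
    return complete, incomplete
```

This only inspects records that exist. An access path that never produces a record at all is invisible to it, and finding those paths is what the inventory step is for.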

3. Access reviews are checking roles, not real permissions

Quarterly access reviews are a compliance staple. They're also one of the least effective security controls in most organizations.

Here's the pattern: IGA generates a list of users and their assigned roles. Managers click "approve" on a list of names they recognize, for roles described in terms too abstract to evaluate meaningfully. The review is completed, the checkbox is ticked, and nothing about the organization's actual security posture has changed.

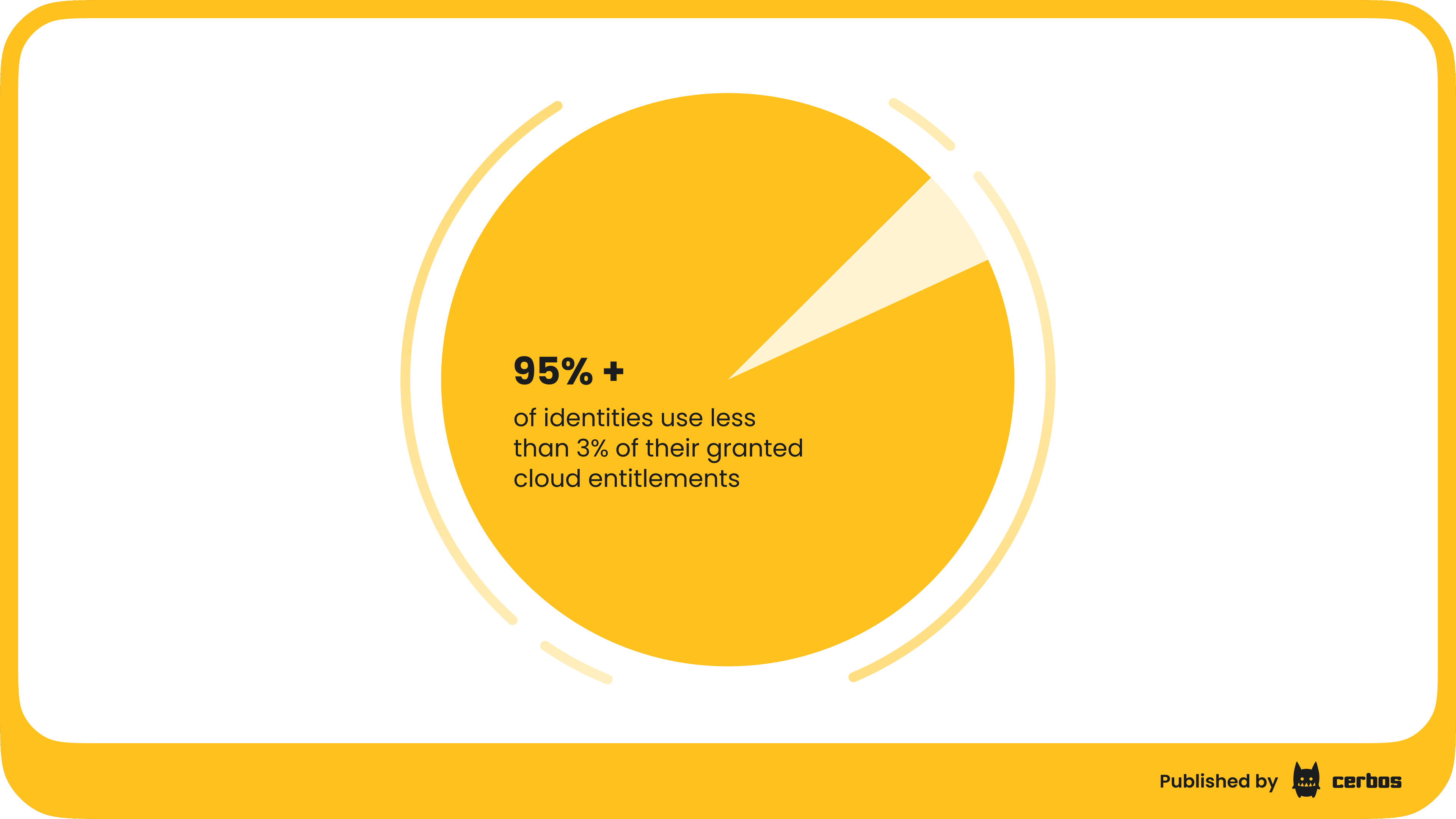

The problem is that roles don't tell you what someone can actually do. A role called "Editor" might mean different things in different applications. The permissions associated with that role may have drifted since it was last reviewed. And the real-world access someone exercises may be a fraction of what their role technically permits - research from CloudKnox (now Microsoft) shows that more than 95% of identities use less than 3% of their granted cloud entitlements. That's not a rounding error. That's a systemic over-provisioning problem that checkbox reviews will never catch.

Auditors are increasingly aware of this gap. They're not just asking whether you conducted the review - they're asking whether the review was meaningful. Did it evaluate actual entitlements or just role labels? Did it consider the principle of least privilege? Can you demonstrate that over-provisioned access was identified and remediated?

Effective access reviews require visibility into what permissions actually exist at the resource level, not just what roles are assigned. This is the difference between coarse-grained authorization (which IGA handles) and fine-grained authorization (which most enterprises still lack). When your authorization system can tell you exactly what actions a given identity is permitted to take on specific resources, your access reviews become meaningful security controls rather than compliance theater.
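To illustrate the difference, the Python sketch below answers "what can this identity do to this specific resource?" rather than "what role does this identity have?". The rules and the attributes `department`, `classification`, and `owner_id` are hypothetical. The point is that the same "editor" role yields different permissions on different documents.

```python
from dataclasses import dataclass


@dataclass
class Principal:
    id: str
    roles: set[str]
    department: str


@dataclass
class Document:
    id: str
    owner_id: str
    department: str
    classification: str  # "internal" or "restricted" in this sketch


# Hypothetical fine-grained rules: each action is a predicate over
# (principal, resource) attributes, not just a role label.
RULES = {
    "view": lambda p, r: r.classification != "restricted" or p.id == r.owner_id,
    "edit": lambda p, r: "editor" in p.roles and p.department == r.department,
    "delete": lambda p, r: p.id == r.owner_id,
}


def permitted_actions(p: Principal, r: Document) -> set[str]:
    """Return exactly which actions this principal may take on this resource."""
    return {action for action, rule in RULES.items() if rule(p, r)}
```

A review built on this can show a reviewer `{"view", "edit"}` for one document and nothing at all for another, instead of the single word "editor".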

What to do now: Audit the gap between assigned roles and actual permissions in your highest-risk applications. If you can't map a role to specific, resource-level entitlements, your access reviews aren't evaluating what auditors care about.
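Here is a minimal sketch of that gap analysis. It assumes you can export role-to-entitlement mappings and a log of allowed requests over the review window. For each identity, it subtracts the entitlements actually exercised from those its roles grant. The role names, entitlement strings, and log format are hypothetical.

```python
from collections import defaultdict

# Hypothetical role definitions mapped to resource-level entitlements.
ROLE_ENTITLEMENTS = {
    "Editor": {"docs:read", "docs:write", "docs:delete", "billing:read"},
    "Viewer": {"docs:read"},
}


def granted(roles: set[str]) -> set[str]:
    """Union of the entitlements granted by each assigned role."""
    out: set[str] = set()
    for role in roles:
        out |= ROLE_ENTITLEMENTS.get(role, set())
    return out


def entitlement_gap(assignments: dict[str, set[str]],
                    allowed_requests: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Per identity: entitlements its roles grant that it never exercised.

    `allowed_requests` holds (identity, entitlement) pairs taken from the
    authorization decision log for the review window.
    """
    used = defaultdict(set)
    for identity, entitlement in allowed_requests:
        used[identity].add(entitlement)
    return {identity: granted(roles) - used[identity]
            for identity, roles in assignments.items()}
```

An empty set means every granted entitlement was used in the window. A large set is a concrete list for the reviewer to keep or revoke, item by item.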

4. Non-human identities are authorized without oversight

Here's a number that should concern every cybersecurity specialist: non-human identities outnumber human identities by roughly 45 to 1 in most enterprise environments. Service accounts, API keys, automation scripts, CI/CD pipelines - they're the connective tissue of modern infrastructure. And in most organizations, they're authorized with less rigor than a junior employee's laptop.

The numbers from recent industry research are stark: 94% of security leaders report an increase in machine identities, and 50% of organizations experienced security breaches tied to compromised machine identities in the past year. Meanwhile, 68% of organizations lack identity security controls for AI entirely.

The authorization gap for non-human identities is particularly dangerous because these identities often operate with standing privileges that were granted at creation time and never revisited. A service account created for a migration project two years ago may still have write access to production databases. An API key issued to a contractor's integration may still be active months after the engagement ended. These are the access paths auditors probe, and they're the ones most likely to be exploited.

The solution isn't just better inventory management (though that's a prerequisite - you can't govern what you can't see). It's applying the same authorization rigor to machine identities that you apply to human ones: fine-grained, context-aware policies that evaluate each access request based on who (or what) is asking, what they're trying to access, and whether the current context justifies it. Short-lived, task-scoped credentials rather than long-lived static tokens. And authorization decisions that are logged and auditable, so you can answer the auditor's question about that service account with evidence rather than assumptions.
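The sketch below illustrates short-lived, task-scoped credentials using only Python's standard library. A token carries the workload name, an explicit scope list, and an expiry, and it is signed with HMAC-SHA256. This is a teaching example that assumes a single shared signing key and a five-minute default lifetime. Production systems would normally use a standard token format such as JWT through a vetted library, with keys kept in a secrets manager.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: in practice this key comes from a secrets manager and is rotated.
SIGNING_KEY = b"example-only-signing-key"


def _sign(payload: bytes) -> str:
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(workload: str, scopes: list[str], ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Issue a credential limited to `scopes` that expires after `ttl_seconds`."""
    issued = time.time() if now is None else now
    claims = {"sub": workload, "scopes": sorted(scopes), "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    return payload.decode() + "." + _sign(payload)


def authorize(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Accept only an untampered, unexpired token that carries the exact scope."""
    payload_b64, _, signature = token.rpartition(".")
    payload = payload_b64.encode()
    if not hmac.compare_digest(_sign(payload), signature):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    return current < claims["exp"] and required_scope in claims["scopes"]
```

Because the token expires on its own, a forgotten migration job stops having access minutes after the task ends, rather than years later.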

What to do now: Identify all service accounts and API keys with access to your most sensitive systems. For each one, determine: who owns it, when its permissions were last reviewed, and whether those permissions are the minimum necessary for its current function. The answers will almost certainly surface orphaned or over-privileged non-human identities that represent both audit findings and real security risk.
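Once you have that inventory, the three checks can be automated. This Python sketch assumes each machine identity record holds an owner, a last-review date, its granted permissions, and the permissions it actually used in the last 90 days, which could be derived from authorization decision logs. The record shape and the 90-day threshold are assumptions.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class MachineIdentity:
    """Hypothetical inventory record for a service account or API key."""
    name: str
    owner: Optional[str]
    last_reviewed: Optional[date]
    granted: set[str] = field(default_factory=set)
    used_last_90d: set[str] = field(default_factory=set)


def review_findings(identities: list[MachineIdentity], today: date,
                    max_review_age_days: int = 90) -> list[tuple[str, str]]:
    """Flag ownerless, stale, or over-privileged machine identities."""
    findings = []
    for ident in identities:
        if not ident.owner:
            findings.append((ident.name, "no owner"))
        if (ident.last_reviewed is None
                or today - ident.last_reviewed > timedelta(days=max_review_age_days)):
            findings.append((ident.name, "review overdue"))
        unused = ident.granted - ident.used_last_90d
        if unused:
            findings.append((ident.name, "unused permissions: " + ", ".join(sorted(unused))))
    return findings
```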

5. AI agents have been deployed without an authorization model

This is the blind spot that's emerging fastest, and it's the one most likely to define the next generation of access control audit findings.

AI agents have gone from "interesting demo" to board-level initiative at an alarming pace. PwC's 2025 AI Agent Survey found that 79% of organizations have already adopted AI agents to some extent, and 88% of executives plan to increase AI budgets specifically because of agentic AI. Yet McKinsey's 2025 State of AI survey found that only 23% are actively scaling agentic systems - the rest are stuck in experimentation. The board has bought into AI. Budget is flowing. Agents are being built or bought. And in many organizations, the security team found out somewhere between "proof of concept" and "we're going live next month."

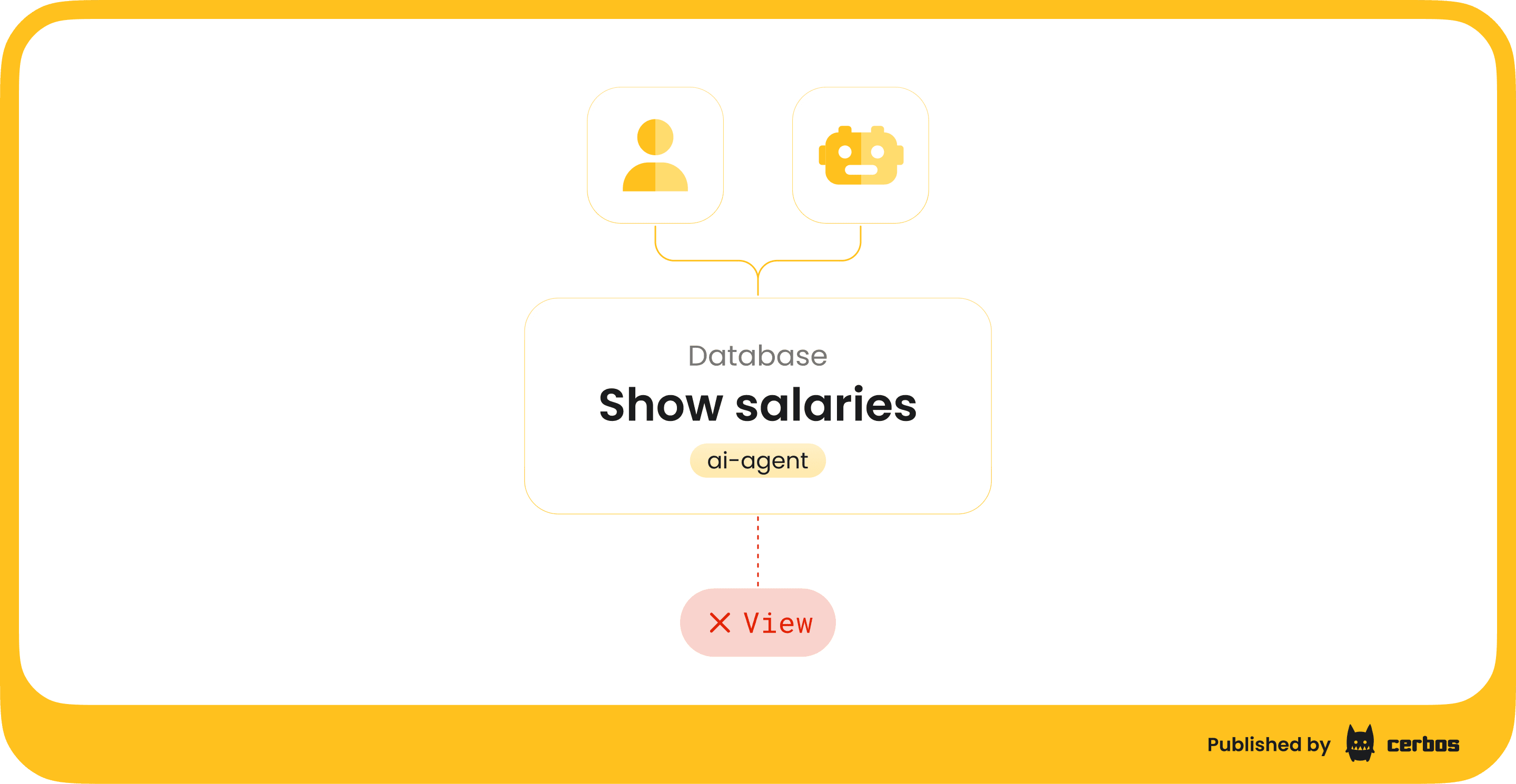

The authorization challenge with AI agents is fundamentally different from the one posed by human users. These agents need to make API calls, access data stores, invoke tools, and act on behalf of users - all at machine speed, with delegation chains that are difficult to trace. Traditional role-based access control simply cannot handle the requirements: fine-grained access decisions, context-aware evaluation, delegation of authority with human-in-the-loop flows, and authorization requests that carry enough context for a policy engine to make a good decision.

The consensus emerging from IAM industry events and from our own work with security teams is clear: fine-grained, context-aware authorization is the only viable model for AI agents. The architecture that's gaining traction for securing AI agent tool calls - including MCP (Model Context Protocol) server access - places a policy enforcement point in-line, querying a PDP for every tool invocation. The principal is the agent (with its delegation chain). The resource is whatever the tool is accessing. The action is the tool call itself. The context is everything the PDP needs to make the decision.
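Here is a minimal Python sketch of that in-line pattern. It does not use any MCP SDK. Every tool call goes through `call_tool()`, which asks a decision function whether the agent, acting for a specific user, may perform the action. The sketch allows a call only when both the agent and the delegating user are permitted, and it honors a `kill_switch` flag in the context. Those rules, the permission table, and all names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class AgentPrincipal:
    agent_id: str
    on_behalf_of: str  # the human user who delegated the task
    delegation_chain: list[str] = field(default_factory=list)


# Hypothetical policy: (resource, action) pairs each principal may perform.
PERMISSIONS = {
    "user:alice": {("crm", "read_contact"), ("crm", "update_contact")},
    "agent:support-bot": {("crm", "read_contact")},
}

AUDIT: list[dict] = []


def pdp_check(principal: AgentPrincipal, resource: str, action: str,
              context: dict) -> bool:
    """Allow only if the agent AND the delegating user may act, and no kill switch."""
    agent_ok = (resource, action) in PERMISSIONS.get(f"agent:{principal.agent_id}", set())
    user_ok = (resource, action) in PERMISSIONS.get(f"user:{principal.on_behalf_of}", set())
    allowed = agent_ok and user_ok and not context.get("kill_switch", False)
    AUDIT.append({
        "agent": principal.agent_id,
        "on_behalf_of": principal.on_behalf_of,
        "delegation_chain": list(principal.delegation_chain),
        "resource": resource,
        "action": action,
        "allowed": allowed,
    })
    return allowed


def call_tool(principal: AgentPrincipal, resource: str, action: str,
              tool: Callable[..., Any], context: Optional[dict] = None,
              **kwargs: Any) -> Any:
    """In-line enforcement point: every tool invocation is checked first."""
    if not pdp_check(principal, resource, action, context or {}):
        raise PermissionError(f"{principal.agent_id} denied {action} on {resource}")
    return tool(**kwargs)
```

Requiring both checks means an agent can never exceed the permissions of the user it acts for. Each audit entry also records the delegation chain behind the call.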

And here's where it connects to your career risk as a security leader: Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. If your organization's project is among them, the question isn't just "why did the AI project fail?" It's "who was responsible for securing it?" Regulation points the same way: the EU AI Act already requires high-risk AI systems to support automatic event logging and human oversight, which means actions taken by AI need to be traceable. There is no AI exemption to existing regulations.

The organizations building their authorization layer for AI now - with proper policy enforcement, audit trails, and kill-switch capabilities - will ship agents faster and more safely. Those that don't will face audit findings they aren't prepared for, or worse, incidents where an AI agent accessed data it shouldn't have and nobody can explain how or why.

What to do now: If your organization has deployed or is planning to deploy AI agents, ask three questions: Does each agent have a unique identity? Are agent actions authorized at the API/resource level (not just at the prompt level)? Can you produce an audit trail of every action an agent has taken? If the answer to any of these is "no" or "I don't know," you have an authorization gap that's growing by the day.

The common thread: Authorization is infrastructure, not a feature

These five blind spots share a root cause. Authorization has been treated as a feature inside individual applications rather than as its own layer of infrastructure. The industry is catching up - the latest IAM reference architectures now place Authorization Management Platforms as their own tool category alongside IGA, PAM, Access Management, and ITDR. That's a significant recognition: authorization isn't a checkbox inside your access management platform. It's a distinct architectural concern that requires its own tooling, its own standards, and its own investment.

The shift toward externalized, policy-as-code authorization - where policies are version-controlled, testable, and auditable - aligns with how every other critical infrastructure component is managed in modern enterprises. Your infrastructure-as-code is in Git. Your CI/CD pipelines are automated and auditable. Your authorization policies should be too.
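To show what "testable" means for policies, the Python sketch below keeps a versioned policy as data alongside a table of expected decisions. A test runner returns the cases that fail, and a CI job could use that result to block a merge. Cerbos itself writes policies in YAML with its own schema and test format. This sketch only mirrors the idea, and the rule structure and condition names are hypothetical.

```python
# Hypothetical versioned policy kept in Git next to its tests.
POLICY = {
    "version": "v7",
    "resource": "expense_report",
    "rules": [
        {"actions": ["view"], "roles": ["employee"], "condition": "is_owner"},
        {"actions": ["view", "approve"], "roles": ["manager"], "condition": "same_department"},
    ],
}

# Named conditions the rules can reference.
CONDITIONS = {
    "is_owner": lambda p, r: p["id"] == r["owner"],
    "same_department": lambda p, r: p["department"] == r["department"],
}


def evaluate(policy: dict, principal: dict, resource: dict, action: str) -> bool:
    """Allow if any rule matches the action, a role, and its condition; else deny."""
    for rule in policy["rules"]:
        if (action in rule["actions"]
                and set(principal["roles"]) & set(rule["roles"])
                and CONDITIONS[rule["condition"]](principal, resource)):
            return True
    return False  # default deny


EMPLOYEE = {"id": "u1", "roles": ["employee"], "department": "ops"}
MANAGER = {"id": "m1", "roles": ["manager"], "department": "ops"}
OTHER_MANAGER = {"id": "m2", "roles": ["manager"], "department": "sales"}
REPORT = {"owner": "u1", "department": "ops"}

# Expected decisions; a CI job fails the merge if any case regresses.
TEST_CASES = [
    (EMPLOYEE, REPORT, "view", True),
    (EMPLOYEE, REPORT, "approve", False),
    (MANAGER, REPORT, "approve", True),
    (OTHER_MANAGER, REPORT, "approve", False),
]


def run_policy_tests(policy: dict, cases: list) -> list[int]:
    """Return the indexes of test cases whose decision differs from expected."""
    return [i for i, (p, r, action, expected) in enumerate(cases)
            if evaluate(policy, p, r, action) != expected]
```

A change that accidentally lets employees approve their own reports fails the second case before it reaches production. The test run and the policy version together become audit evidence.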

The emerging AuthZEN standard (finalized in January 2026, with 18+ certified implementations) is making this interoperability real at the protocol level, standardizing how policy decision points and policy enforcement points communicate. This is the connective tissue that allows you to swap components, enforce consistently across environments, and demonstrate to auditors that your controls are systematic rather than ad hoc.

What closing these gaps actually looks like

Understanding the blind spots is step one. The harder question is: what does the fix look like in practice, without requiring a multi-year rearchitecture project or burning out a team that's already stretched thin?

This is the problem Cerbos was built to solve. Cerbos is an enterprise-grade authorization management platform designed to secure access across complex, distributed environments, SaaS products, and regulated systems. It externalizes authorization logic from application code, making enterprise access control consistent and centrally managed across all your services - whether those services are accessed by humans, machine identities, or AI agents.

Here's why that matters for the blind spots we've been discussing.

Each of the five gaps comes down to the same architectural deficit: authorization decisions are made in too many places, with too little visibility, and no consistent way to prove what happened. Cerbos addresses this by providing a complete authorization system built from four connected components:

The Policy Decision Point (PDP) is the authorization engine - it evaluates access control logic and returns allow or deny decisions to your services. It's open source, stateless, and lightweight, meaning it runs wherever you need it: in containers, Kubernetes clusters, on-premise, air-gapped environments, or at the edge. The PDP handles millions of authorization checks per second with predictable, sub-millisecond latency. This is what makes real-time, fine-grained authorization feasible - not just for human users, but for the machine identities and AI agents that are generating access requests at machine speed.

Enforcement Point SDKs are lightweight libraries that enforce authorization decisions directly within your applications and APIs. They provide a simple, consistent interface across languages for calling the PDP in real time. The SDKs work with any identity provider, which means you don't have to rip out your existing authentication stack - you're adding the authorization layer that's been missing.

Cerbos Hub is the policy administration point - the authorization management layer for authoring, testing, deploying, and auditing authorization policies at scale. This is where security teams get the visibility that auditors demand: a unified audit trail across services, agents, workloads, and tenants, with every decision linked to the exact policy version that produced it. It's also where you get the control that makes your job defensible - centralized policy management with version history, automated testing in CI pipelines before any policy change goes live, and real-time monitoring of authorization activity across your entire environment.

Cerbos Synapse is the newest component that completes the platform. It gathers identity, resource, and relationship data from teams’ existing systems - identity providers, databases, graph databases, internal APIs - and delivers complete context to the policy engine before every authorization decision. Applications send a user ID; Synapse returns a fully enriched request. It also speaks the protocols that infrastructure systems like Envoy, Kafka, Trino, and Kubernetes already use, so those systems connect to Cerbos natively without custom adapters.
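Enrichment is a general pattern, often called a policy information point, and it can be sketched independently of Synapse. In the Python sketch below, three in-memory dictionaries stand in for an identity provider, a database, and an internal API. The application passes only IDs, and the function assembles the full principal and resource context. All sources, attribute names, and the `missing_context` field are hypothetical and do not reflect Synapse's actual interface.

```python
# Hypothetical attribute sources standing in for an IdP, a database,
# and an internal API. A real system would query these over the network.
IDP = {"u-17": {"roles": ["analyst"], "department": "risk"}}
ACCOUNTS_DB = {"acct-9": {"owner": "u-42", "region": "EU", "department": "risk"}}
ENTITLEMENTS_API = {"u-17": {"clearance": "confidential"}}


def enrich(user_id: str, resource_id: str, action: str) -> dict:
    """Turn bare IDs into a full authorization request for the PDP."""
    missing = []
    if user_id not in IDP:
        missing.append("principal")
    if resource_id not in ACCOUNTS_DB:
        missing.append("resource")
    principal = {"id": user_id, **IDP.get(user_id, {}), **ENTITLEMENTS_API.get(user_id, {})}
    resource = {"id": resource_id, **ACCOUNTS_DB.get(resource_id, {})}
    # Report gaps explicitly so the policy can deny on incomplete context.
    return {"principal": principal, "resource": resource,
            "action": action, "missing_context": missing}
```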

Together, these four components give enterprise teams a single governed layer for every authorization decision.

Cerbos supports multiple access control models - RBAC, ABAC and PBAC - which means you can model permissions the way your business actually works, rather than forcing everything into rigid role hierarchies. It's designed for Zero Trust architectures and AI-driven systems, providing continuous, policy-based authorization that scales from a local deployment to global production. And because policies are written in human-readable YAML and managed as code in Git, they're reviewable by security teams and compliance officers - not just developers.

For the CISO evaluating this, the question that matters most isn't "what does it do?" It's "can I defend this decision to my board?" Cerbos is SOC 2 and SOC 3 certified, ISO 27001 certified, and built to support compliance with GDPR, PCI DSS, and HIPAA. Organizations in fintech, insurance, energy, and other regulated industries use it today. It deploys without requiring overtime from your team or a twelve-month implementation timeline. And because the PDP is open source, you can evaluate it on your own terms before making a commitment.

Where to start

If you've recognized your organization in any of these five blind spots, you're not alone. Most enterprises are somewhere between awareness and action on authorization. Here's a pragmatic starting point:

First, conduct an authorization inventory. Where are access decisions made today? By what mechanism? Are they logged? Are they auditable? This exercise alone surfaces the gaps that matter most.

Second, prioritize by risk. Start with your most sensitive systems, your highest-risk identities (including non-human ones), and any AI agent deployments. These are where audit findings will have the most impact - on your compliance posture and on your career.

Third, evaluate externalized authorization. The policy-as-code approach - centralized policy administration, decentralized enforcement, complete audit trails - addresses the root cause of all five blind spots. It's the architectural pattern that leading IAM frameworks recommend, the approach that aligns with Zero Trust principles, and the foundation you'll need as AI agents move from pilot to production.

The security leader who can walk into a board meeting and say "I can show you exactly who accessed what, when, why, and under what policy - for every human user, every service account, and every AI agent" isn't just audit-ready. They're the one who sleeps at night.

And in this role, that's worth more than any feature on a vendor's slide deck.

Want to see how Cerbos closes these authorization gaps for security teams in regulated industries? Explore on your own, or feel free to book a free call with our team.

Feel free to download our IAM security checklist for 2026 to find the gaps in your IAM posture before an auditor or attacker does. It covers 9 risk domains, AI agent security included.
