I spent the last few weeks on the road, including IIW, and kept noticing the same thing about how enterprises are rolling out AI. Most governance plans have a kill switch in them somewhere. A button. A flag. A circuit breaker. Something you can flip the moment an agent does the wrong thing.

It feels safe. It is also the wrong way to think about it.

Jonathan Chan, former senior technology and security executive at Episource, put it to me more cleanly than I had heard before.

"It isn't a kill switch. It's a dimmer switch. You want to be able to fade the agent's access down while you get behind the wall and rewire things to how they should be. The lights never go fully dark."

It is worth sharing because most of the AI governance language CISOs and IAM leaders are dealing with right now is still binary. On or off. Allow or revoke. Deploy or kill. That works in a lab. It does not work in a hospital, a bank, a payments network, or any environment where the agent is doing something a human used to do and stopping it instantly creates a different incident from the one you were trying to prevent.

Why "kill switch" is the default for AI agent governance, and why it falls apart

The kill switch metaphor came from a reasonable place. The first wave of AI agents in production looked like things you might want to flip off in a hurry. A prototype tool. A copilot. A research assistant. If something went wrong, you pulled it offline and not much else broke.

That is not where most enterprises are now. Agents are calling APIs, triggering workflows, moving money, opening tickets, querying patient data, generating claims, escalating incidents, and writing to systems of record. Replit's coding agent deleted a production database despite explicit instructions not to and an active code freeze. The agent did not crash. It did not malfunction in any traditional sense. It drifted from the plan it was authorized to execute, and the only governance lever available could be pulled only after the damage was done.

When a CISO inherits agents like that, "kill it" stops being a useful primitive. Killing the agent means stopping the workflow it is in the middle of. It means dropping context. It means waking someone at 3am to manually finish what the agent was doing. In a regulated environment, it can also mean a compliance event of its own, because you have just disrupted a process that auditors expect to be continuous and logged.

The kill switch is a panic button for a problem that does not look like a panic anymore.

What AI agent drift actually means

The word Jonathan used for the problem is a useful one. Drift.

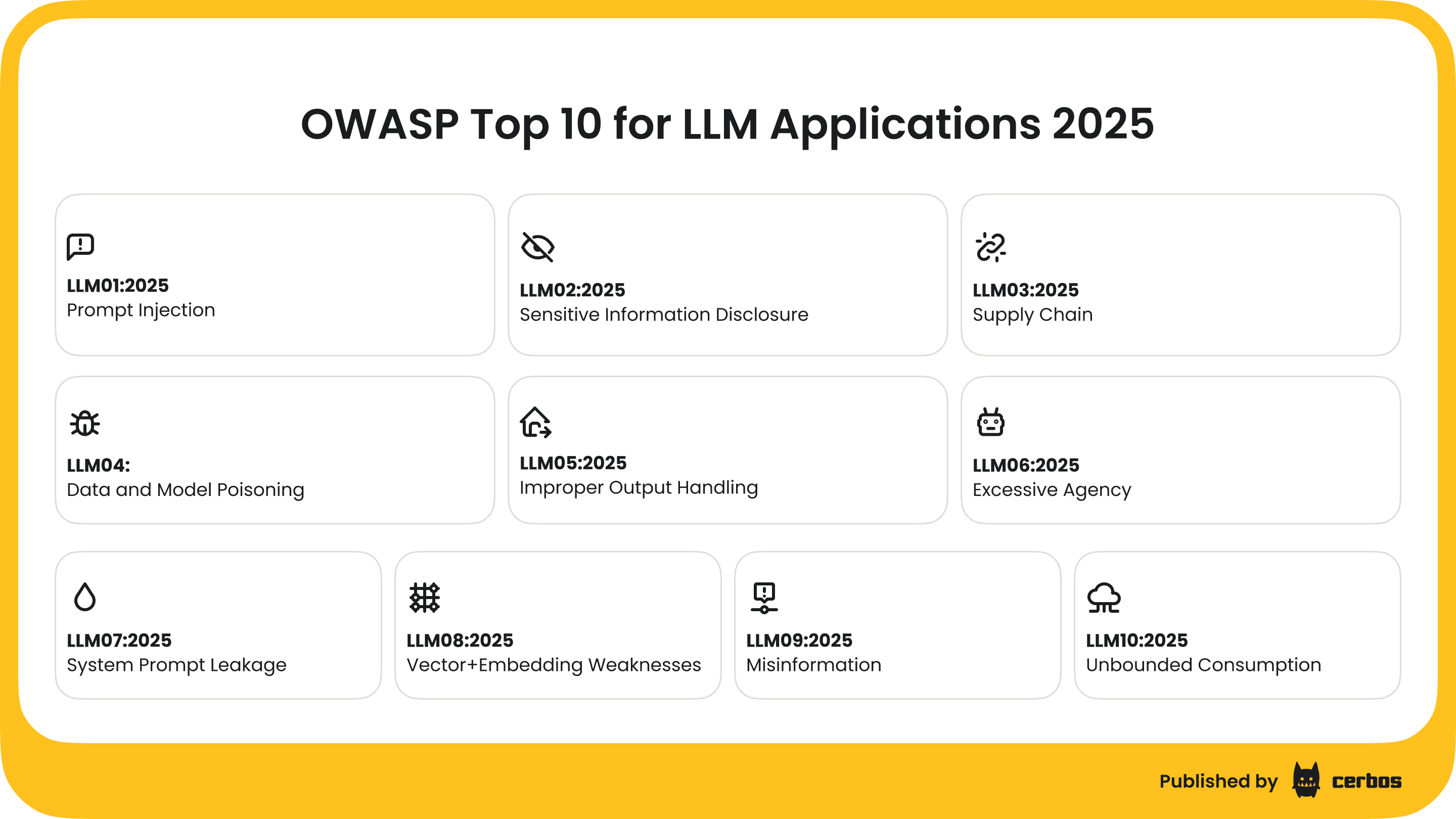

An agent drifts when it diverges from the plan it was authorized to execute. It is not necessarily compromised. It is not necessarily malicious. It may be following its instructions perfectly and still be doing the wrong thing, because the world it is operating in has changed, the data it is reading has shifted, or the prompt it received pulled it sideways. The OWASP Top 10 for LLM Applications covers a lot of these failure modes under different names, and what they have in common is that the agent is operating at the limit of, or just outside, the boundary it was meant to stay inside.

Drift is the thing CISOs and IAM leaders are quietly worried about, because it is hard to see and harder to reason about. Identity-led controls catch the agent at the door. They do not catch the agent that already has the door key and starts opening rooms it was never expected to enter. That is a runtime authorization problem, and most enterprises do not have a unified view of it.

Why regulated industries cannot rely on a binary AI agent kill switch

Healthcare is the clearest example, which is why Jonathan's framing landed first there. If an agent helping a clinical operations team starts behaving oddly, "kill it" means a queue of patient referrals stops moving. If the agent supporting claims adjudication starts drifting, "kill it" means a backlog forms inside an SLA window that has regulatory teeth.

The same is true in financial services, where agents are increasingly inside payment flows, fraud triage, and customer servicing. It is true in critical infrastructure, where uptime is part of the regulatory contract. It is true in any operation where the agent is now load-bearing.

In all of those environments, the question a CISO has to answer is not "can we shut it down". It is "can we shut it down without creating the next incident, and can we prove afterwards that we acted within policy". The kill switch cannot answer either question. The IBM Cost of a Data Breach Report 2024 puts the average cost of a breach in regulated industries materially above the cross-industry average, with healthcare the costliest industry every year for more than a decade. When the governance plan is a single switch, the blast radius of a bad day is the entire operation it touches, and the audit afterwards has very little to work with.

The dimmer switch model for AI agent authorization

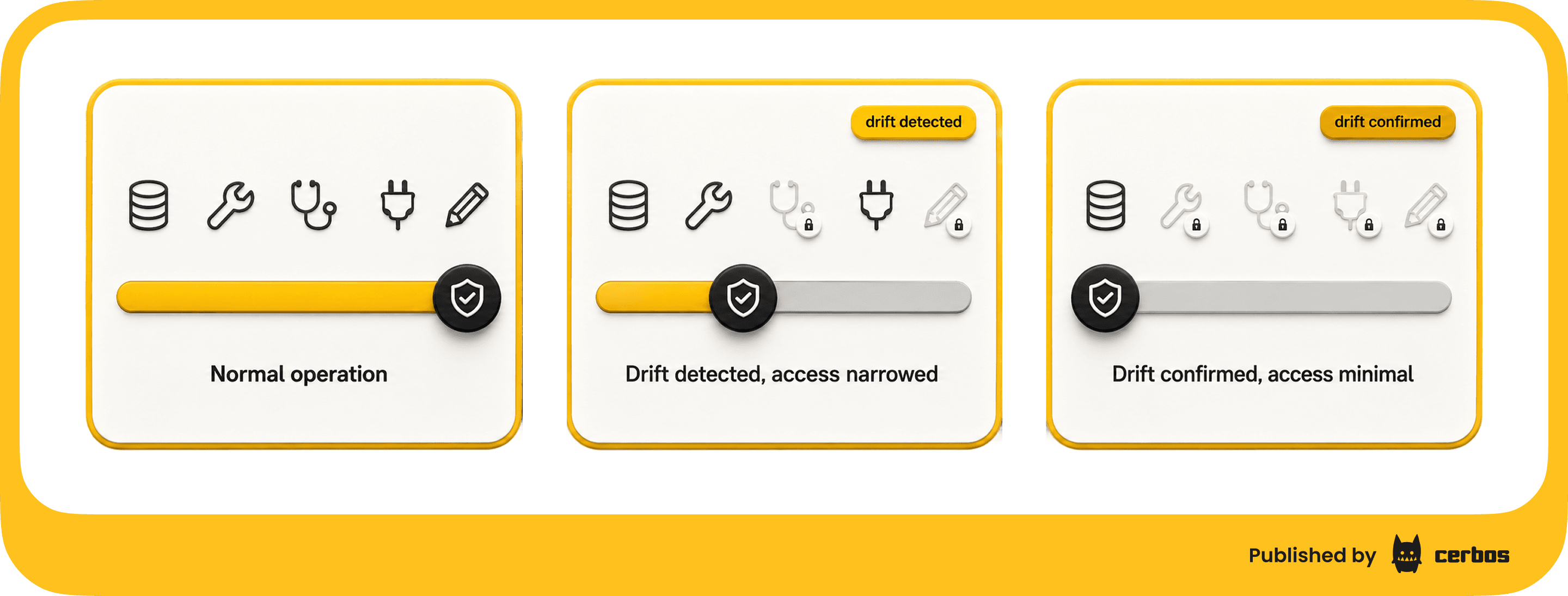

A dimmer switch is fine-grained, runtime, and scopable. It assumes you will need to make a thousand small adjustments long before you ever need to make one big one.

In practice, it looks like this. The agent is operating under a policy that defines what it is allowed to do, with whom, on which resources, under what conditions. When something starts to look wrong, you do not flip the agent off. You narrow the policy. You take it off the most sensitive systems first. You put it into read-only mode against patient data. You require a human approval on any action above a certain value. You scope its tool access down to a known-safe subset. The agent keeps running. The work keeps moving. The control plane keeps a complete record of every change you made and when, which is exactly what an auditor will ask for when they want to see how you responded.

If it turns out the agent was fine, you fade the access back up. If it turns out the agent was drifting in a way you cannot live with, you keep dimming until the access is effectively zero. By the time you reach the off position, you are doing it deliberately, with a documented trail, and the rest of the operation has had time to adjust.
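To make the fade-down and fade-up concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `Dimmer` levels, the `AgentPolicy` class, the tool names, and the approval threshold are hypothetical and are not taken from any product. It shows cumulative restrictions (each lower level keeps the limits of the levels above it) and a record of every level change.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import IntEnum


class Dimmer(IntEnum):
    """Hypothetical access levels, ordered from darkest to brightest."""
    OFF = 0             # nothing is allowed
    READ_ONLY = 1       # safe tools only, and no writes
    SAFE_TOOLS = 2      # only the known-safe tool subset
    HUMAN_APPROVAL = 3  # all tools, high-value actions need sign-off
    FULL = 4            # the originally authorized policy


@dataclass
class AgentPolicy:
    agent_id: str
    level: Dimmer = Dimmer.FULL
    # Illustrative tool names and threshold, not from the article.
    safe_tools: frozenset = frozenset({"read_referral", "update_referral_status"})
    approval_threshold: float = 1000.0
    audit_log: list = field(default_factory=list)

    def set_level(self, level: Dimmer, changed_by: str, reason: str) -> None:
        """Change the level and record who changed it, when, and why."""
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "from": self.level.name,
            "to": level.name,
            "by": changed_by,
            "reason": reason,
        })
        self.level = level

    def decide(self, tool: str, is_write: bool, value: float = 0.0) -> str:
        """Return 'allow', 'deny', or 'needs_approval' for a single action."""
        if self.level == Dimmer.OFF:
            return "deny"
        if self.level <= Dimmer.READ_ONLY and is_write:
            return "deny"
        if self.level <= Dimmer.SAFE_TOOLS and tool not in self.safe_tools:
            return "deny"
        if self.level <= Dimmer.HUMAN_APPROVAL and value > self.approval_threshold:
            return "needs_approval"
        return "allow"


policy = AgentPolicy("claims-agent")
policy.set_level(Dimmer.HUMAN_APPROVAL, "oncall-secops", "unusual refund volume")
print(policy.decide("issue_refund", is_write=True, value=5000.0))
policy.set_level(Dimmer.READ_ONLY, "oncall-secops", "drift confirmed")
print(policy.decide("read_referral", is_write=False))
```

The agent is never stopped in this sketch. Only the answers it gets back change, and `audit_log` holds the trail of who moved the dimmer and why.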

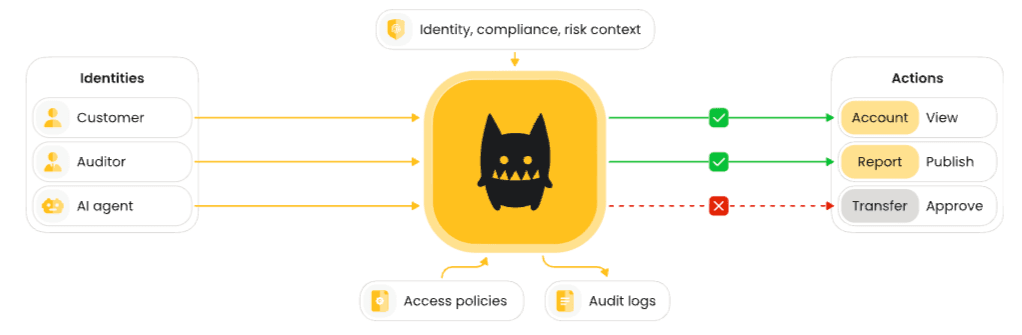

This is what externalized, policy-based authorization gives teams. The policy lives outside the agent, so the agent does not get to decide whether the rules apply to it. Changes propagate in seconds across every agent running in the environment. Every decision the agent makes, and every change you make to the policy itself, lands in an audit trail that holds up to SOC 2, ISO 27001, HIPAA, and PCI DSS scrutiny.

What CISOs and IAM leaders gain from runtime AI authorization

The pieces are not exotic. Teams already have most of the language for this from the externalized authorization world.

A policy decision point sits in the request path of every agent action. The agent asks "can I do this, on this resource, for this user, right now". The decision point evaluates the request against the current policy and returns an answer. The policy is centrally managed, version controlled, and pushed out to every decision point in seconds when it changes. Identity, context, and resource attributes flow into the decision through enrichment, so the policy can reason about the human behind the agent, the data the agent is touching, and the system the agent is acting on.
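The request flow described above can be sketched in a few lines of Python. This is an illustrative toy, not the API of Cerbos or any other product: `Request`, `Rule`, `DecisionPoint`, the enrichment lookups, and the user and resource names are all hypothetical. It shows a default-deny check where any matching deny rule wins, enrichment of the request with identity and resource attributes, and a policy version that changes when a new policy is pushed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Request:
    agent_id: str       # the agent making the call
    on_behalf_of: str   # the human the agent is acting for
    action: str
    resource: str
    attrs: dict = field(default_factory=dict)


@dataclass
class Rule:
    effect: str                        # "allow" or "deny"
    actions: frozenset
    condition: Callable[[dict], bool]  # evaluated against enriched attributes


class DecisionPoint:
    def __init__(self, rules: list, enrich: Callable[[Request], dict]):
        self.rules = rules
        self.enrich = enrich
        self.version = 1

    def load_policy(self, rules: list) -> None:
        """Stand-in for a central policy push; bumps the version."""
        self.rules = rules
        self.version += 1

    def check(self, req: Request) -> dict:
        attrs = {**req.attrs, **self.enrich(req)}
        effects = {r.effect for r in self.rules
                   if req.action in r.actions and r.condition(attrs)}
        # Default deny: allow only if rules matched and none of them denies.
        effect = "allow" if effects == {"allow"} else "deny"
        return {"effect": effect, "policy_version": self.version}


# Hypothetical lookups standing in for an identity provider and a data catalog.
USER_ROLES = {"dr.lee": "clinician"}
RESOURCE_SENSITIVITY = {"referral/881": "phi"}


def enrich(req: Request) -> dict:
    return {
        "user_role": USER_ROLES.get(req.on_behalf_of, "unknown"),
        "sensitivity": RESOURCE_SENSITIVITY.get(req.resource, "public"),
    }


pdp = DecisionPoint(
    rules=[Rule("allow", frozenset({"read", "update"}),
                lambda a: a["user_role"] == "clinician")],
    enrich=enrich,
)
req = Request("referral-agent", "dr.lee", "update", "referral/881")
before = pdp.check(req)

# Narrow the policy: keep the allow rule, add a deny on updates to PHI.
pdp.load_policy(pdp.rules + [
    Rule("deny", frozenset({"update"}), lambda a: a["sensitivity"] == "phi"),
])
after = pdp.check(req)
print(before, after)
```

The agent's code does not change between the two checks. Only the policy does, which is why the rules have to live outside the agent.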

Translated into the things a CISO actually gets, that means:

- A single, unified view of what every agent is allowed to do, across every system it touches

- Instant revocability, scoped from full access down to nothing in seconds


- A demonstrable, time-stamped audit trail of every authorization decision and every policy change

- A way to apply the same governance to non-human identities that you already apply to human users

- The same architecture covering authorization for MCP servers and any other agent runtime that turns up in the environment

Most security teams have the policy intent. They do not have the place to enforce it consistently, or the record to prove they did. The dimmer switch model is what gives them both.

Shared vocabulary for AI agent governance conversations

The reason I wanted to write this up is that Jonathan's framing is genuinely portable. CISOs and IAM leaders do not need a new product category to use it. They need shared vocabulary to take into their own engineering, risk, and compliance conversations.

A few phrases worth borrowing.

"We do not have a kill switch problem. We have a dimmer switch problem." Useful when an executive committee is asking why the agent governance plan is more complicated than they expected.

"The agent is drifting." Useful when an incident is in progress and the team needs to separate "compromised" from "behaving outside the authorized plan", because the response is different.

"Fade it down, then figure it out." Useful when the instinct in the room is to pull the agent offline and the operational cost of doing so is higher than the security benefit.

"Show me the policy that was in effect at the time." The version of the question every internal investigation, regulator, and auditor is going to ask after the fact. If the answer is a screenshot or a Slack thread, the dimmer switch is not really there yet.
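One way to make that question answerable is to keep every policy version with the time it took effect and look up the version that was live at a given moment. The sketch below assumes a hypothetical in-memory `PolicyHistory` class with invented version identifiers and timestamps. A real deployment would more likely rely on version control or the audit log of its policy store.

```python
import bisect
from datetime import datetime, timezone


class PolicyHistory:
    """Append-only list of policy versions, ordered by effective time."""

    def __init__(self):
        self._times = []     # effective timestamps, ascending
        self._versions = []  # (version_id, policy) pairs, same order

    def record(self, effective_at: datetime, version_id: str, policy: dict) -> None:
        if self._times and effective_at < self._times[-1]:
            raise ValueError("versions must be recorded in chronological order")
        self._times.append(effective_at)
        self._versions.append((version_id, policy))

    def in_effect_at(self, when: datetime):
        """Return the (version_id, policy) live at `when`, or None if none was."""
        i = bisect.bisect_right(self._times, when)
        return self._versions[i - 1] if i else None


def utc(*args) -> datetime:
    return datetime(*args, tzinfo=timezone.utc)


history = PolicyHistory()
history.record(utc(2025, 1, 1, 9, 0), "v1", {"level": "FULL"})
history.record(utc(2025, 1, 1, 14, 30), "v2", {"level": "READ_ONLY"})

# Which policy governed an action taken at 14:45?
print(history.in_effect_at(utc(2025, 1, 1, 14, 45)))
```

If the lookup returns a version identifier and a policy document rather than a guess, the investigation has something to work with.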

None of this replaces the security tooling already in place. Identity controls, secrets management, runtime monitoring, and incident response are all still required. The dimmer switch sits alongside them and gives CISOs and IAM teams something they did not previously have, which is a way to respond to drift without creating a second incident, and a way to prove afterwards that they responded within policy.

Putting the dimmer switch model into practice

The kill switch was the right metaphor for a moment that is already passing. The dimmer switch is closer to how regulated enterprises actually need to govern agents that are now part of how the work gets done. Credit to Jonathan Chan for the framing. We thought it was sharp enough that more CISOs and IAM leaders should have it in their vocabulary.

If any of this maps to what you are working through, the AI security page on our site walks through how runtime authorization, policy enforcement, and audit logging fit together for AI agents in production.

Try Cerbos to see how policy changes propagate across agents in seconds, or book a call if you want to talk through the architecture with the team.
